TOPICS

Contents

Containers Vs. Virtual Machines

PACKAGING A CUSTOMIZED CONTAINER

EXPOSING OUR CONTAINER WITH PORT REDIRECTS

INTRODUCTION TO DOCKER

Docker is part of many production workflows and is mainly used within the DevOps toolchain. It is an open source project that automates the deployment of applications inside software containers by providing an additional layer of abstraction and automation of operating-system-level virtualization.

In short, it is a tool that packages an application and the dependencies it needs into a "virtual container" so that it can run on any Linux system or distribution.

There are many reasons to use Docker. Although we generally hear about Docker in connection with application development and deployment, there are plenty of other uses:

· Configuration Simplification

· Enhance Developer productivity

· Server consolidation and Management

Containers Vs. Virtual Machines

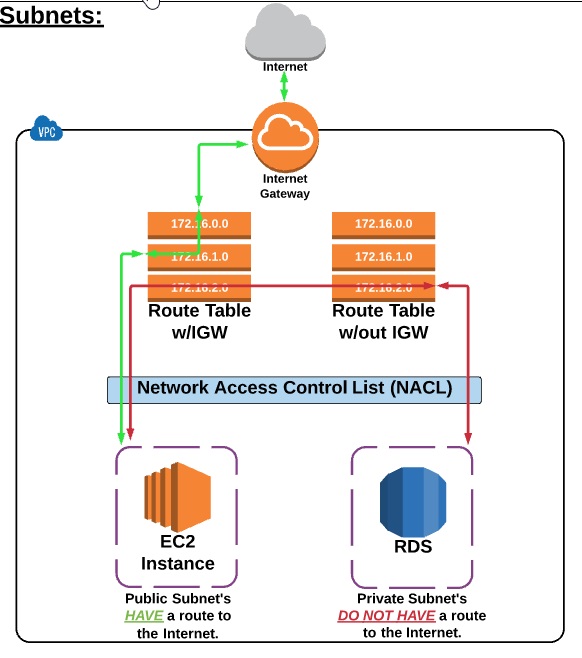

A virtual machine, or VM for short, is an emulation of a specific type of computer system. It operates based on the architecture and functions of that real computer. Virtualization allows a guest OS to run on top of another OS, but the guest OS does not communicate with the host OS directly; it does so through a hypervisor. An AWS instance is an example of a virtual machine.

A container isolates and packages an application together with the dependencies it needs, so that it can run independently of its surroundings. Unlike a VM, a container does not carry a full guest OS; it shares the host's kernel while staying isolated from the rest of the host system.

DOCKER ARCHITECTURE

Docker is a client-server application. The client and server can be on the same system, or the Docker client can connect to a remote Docker daemon.

The client and the daemon (server) communicate over sockets or through a RESTful API.
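To make the socket and REST communication concrete, here is a minimal sketch that asks the daemon for its version over a Unix socket. It assumes the daemon listens on the common default path /var/run/docker.sock and that curl is installed; the function name `show_daemon_version` and the `DOCKER_SOCK` variable are made up for this illustration.

```shell
# Ask the Docker daemon for its version through its REST API.
# Assumption: the daemon uses the default Unix socket path below.
show_daemon_version() {
  sock="${DOCKER_SOCK:-/var/run/docker.sock}"
  if [ -S "$sock" ]; then
    # curl sends the HTTP request over the socket instead of TCP
    curl --silent --unix-socket "$sock" http://localhost/version
  else
    echo "no Docker socket at $sock"
  fi
}

show_daemon_version || echo "request to the daemon failed"
```

When the daemon is running and the current user may read the socket, the reply is a JSON document describing the daemon. This is the same kind of request the docker client sends on our behalf.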

The main components of Docker are:

i) Daemon

ii) Client

iii) Docker.io Registry

https://newsroom.netapp.com/blogs/containers-vs-vms/

Is Docker the only player?

No. Other companies and projects have been working on the concept of application virtualization for some time:

i) FreeBSD – Jails

ii) Sun/Oracle Solaris – Zones

iii) Google – lmctfy (Let Me Contain That For You)

iv) OpenVZ

INSTALLING DOCKER

OS: EC2 – AMI

Commands to be used:

- [root@ip-172-31-21-42 run]# sudo yum install -y docker

- [root@ip-172-31-21-42 run]# sudo service docker start

- [root@ip-172-31-21-42 run]# sudo service docker status

Command to verify that Docker was installed properly:

- [root@ip-172-31-21-42 run]# docker run hello-world

The Docker Hub

Docker Hub can be thought of as a repository for our Docker images: we pull images from it to create our containers and push our own images to it. To get started, we can simply sign up at

http://hub.docker.com

Creating Our First Image

>> docker info gives system-wide information about the Docker installation

We will pull the Docker ubuntu image with the tag xenial:

>> docker pull ubuntu:xenial

>> docker images lists all the Docker images available locally

>> docker ps -a lists all containers along with their status, whether up or exited

>> docker restart [containername] or [containerid]

>> docker inspect ubuntu:xenial gives all the details about the image

>> docker attach [containername]

>> docker run -itd ubuntu:xenial /bin/bash runs the container in the background

>> docker inspect [containername] | grep IP

We can run docker inspect on both running and stopped containers.
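As an alternative to filtering the inspect output with grep, docker inspect accepts a --format template that prints only the field we ask for. The sketch below wraps that in a small helper; the function name `container_ip` is made up for this illustration, and a running daemon is needed to actually call it.

```shell
# Print only the IP address(es) of a container.
# $1: container name or ID
container_ip() {
  docker inspect --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' "$1"
}

# Usage (requires a running Docker daemon):
#   container_ip [containername]
```

For a stopped container the address is typically empty, because an IP is only assigned while the container is running.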

One container is independent of another even if both are created from the same base image, and the data in one container is independent of the data in another.

PACKAGING A CUSTOMIZED CONTAINER

Changes made inside a container remain only in that container. If we want to carry them forward, we can do so using two methods:

i) committing our changes under our container

ii) modifying and saving the dockerfile and building the image

Once we have made changes inside a container, we can commit them using the command

>> docker commit -m "added version file for v1" -a "sanjeev" [containername] sanjeev/<<name>>:v1

Next:

>> docker images

We should see the custom image.

Now when we run a container from the custom image, the data should persist.

USING DOCKERFILE

- Create a build dir and do vim Dockerfile

- Enter the build instructions, for example a FROM line and a sample RUN command

- Next we can run the command >> docker build -t="sanjeev/[containername]:v2" .
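The steps above can be sketched as a short script. This is only an illustration: the directory name `build`, the maintainer label, the contents of the RUN line and the image name `sanjeev/myimage` are assumptions rather than values from the original walkthrough.

```shell
# Create the build directory and write a small Dockerfile into it.
mkdir -p build
cat > build/Dockerfile <<'EOF'
# Base image pulled earlier in this guide
FROM ubuntu:xenial
# Hypothetical maintainer label
LABEL maintainer="sanjeev"
# Sample RUN command: record a version marker inside the image
RUN echo "v2" > /version.txt
# Default command when a container starts from this image
CMD ["/bin/bash"]
EOF

# Build the image (requires a running Docker daemon):
#   docker build -t sanjeev/myimage:v2 build
```

Each instruction in the Dockerfile becomes a layer of the image, so unlike docker commit the build is repeatable from the file alone.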

EXPOSING OUR CONTAINER WITH PORT REDIRECTS

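Port redirection lets us choose the host port through which an application running in a container is reached. For example, suppose Tomcat is deployed inside the container on port 8080, but we want to access it at http://localhost:9090/. We can use a port redirect for this with the -p option, which takes the form hostport:containerport. The command below publishes port 80 of an nginx container on port 8080 of the host: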

>> docker run -d -p 8080:80 nginx:latest
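Since the order of the two numbers is easy to mix up, here is a small sketch that spells out what a -p value means. The helper name `describe_port_mapping` is made up for this illustration, and it only handles the simple two-part hostport:containerport form.

```shell
# Explain a "hostport:containerport" value as used by docker run -p.
describe_port_mapping() {
  host_port="${1%%:*}"        # text before the first colon
  container_port="${1##*:}"   # text after the last colon
  echo "host port $host_port -> container port $container_port"
}

describe_port_mapping 9090:8080   # the Tomcat scenario described above
describe_port_mapping 8080:80     # the nginx command above

# For the Tomcat scenario the run command would look like this
# (assuming the official tomcat image, which listens on 8080):
#   docker run -d -p 9090:8080 tomcat
```

The host port always comes first, so for the Tomcat scenario the value is 9090:8080 and not the other way round.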